This afternoon I opened a writing tool. Before I could type anything, it searched 1,482 markdown files. It already knew me.

Andrej Karpathy put out a gist called LLM Wiki. Personal knowledge base, all markdown, maintained by an AI. The wiki accumulates. You don’t rediscover the same thing every time. Search gets a mention near the end, filed under optional tools. Something you bolt on when the wiki gets big enough.

I think that’s backwards. But I’ll get to it.

Morning. Coffee in hand, scrolling a feed.

A thirty-minute video shows up. The kind where you’d learn something if you sat through the whole thing. Ten minutes in, I know it’s real.

But I have to start work. Or drive the kid to school. Or whatever the morning has lined up.

So I drop the link into a playlist. Walk away.

You know the Watch Later playlist. People call it a graveyard, but that’s the wrong picture. Things in a graveyard are buried. Nobody thinks about them. These videos hang around your neck like an albatross. I should watch that. I’ll get to it this weekend. You carry the rope all day. Saturday you don’t watch. Sunday you don’t watch. Monday a new one arrives.

Now a cron job walks my playlist every few hours. When something new appears, an agent picks it up. Pulls the transcript with yt-dlp. Reads the whole thing. Searches my notes for anything connected. Past videos on similar topics, articles that pulled at the same thread.
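A minimal sketch of the polling step, under some assumptions: the playlist is one yt-dlp can read, and processed video IDs are kept in a small JSON file. The function names and the JSON state file are hypothetical, not taken from the actual pipeline. The yt-dlp flags used (`--flat-playlist`, `--print`) list IDs without downloading anything.

```python
import json
import subprocess
from pathlib import Path


def new_video_ids(playlist_ids: list[str], seen: set[str]) -> list[str]:
    """Return IDs that have not been processed yet, in playlist order."""
    return [vid for vid in playlist_ids if vid not in seen]


def list_playlist_ids(playlist_url: str) -> list[str]:
    """Ask yt-dlp for the video IDs in a playlist without downloading media."""
    result = subprocess.run(
        ["yt-dlp", "--flat-playlist", "--print", "id", playlist_url],
        capture_output=True,
        text=True,
        check=True,
    )
    return [line.strip() for line in result.stdout.splitlines() if line.strip()]


def load_seen(path: Path) -> set[str]:
    """Load the set of already-processed IDs (hypothetical state file)."""
    return set(json.loads(path.read_text())) if path.exists() else set()


def save_seen(path: Path, seen: set[str]) -> None:
    """Persist processed IDs so the next cron run skips them."""
    path.write_text(json.dumps(sorted(seen)))
```

Each cron run would call `list_playlist_ids`, pass the result through `new_video_ids`, hand each new ID to the agent, then save the updated set. Keeping the diff as a pure function makes the only stateful part the JSON file.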

Then it writes the note. Title, source, the argument the video is making, the evidence, why it matters to me specifically. A section called Agent Take where it pushes back or sharpens the case.
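One way the template could look in code, as a sketch. The field names follow the sections listed above. The heading layout, the `Related` section, and the `[[wikilink]]` style for cross-references are assumptions about how the note is laid out, not the actual template.

```python
from dataclasses import dataclass, field


@dataclass
class VideoNote:
    title: str
    source: str
    argument: str
    evidence: list[str]
    why_it_matters: str
    agent_take: str
    # Titles of existing notes the search step found (assumed field).
    related: list[str] = field(default_factory=list)


def render_note(note: VideoNote) -> str:
    """Render the note as markdown with one section per template field."""
    lines = [
        f"# {note.title}",
        "",
        f"Source: {note.source}",
        "",
        "## Argument",
        note.argument,
        "",
        "## Evidence",
    ]
    lines += [f"- {item}" for item in note.evidence]
    lines += [
        "",
        "## Why It Matters",
        note.why_it_matters,
        "",
        "## Agent Take",
        note.agent_take,
        "",
        "## Related",
    ]
    # Obsidian-style wikilinks to notes already in the vault.
    lines += [f"- [[{title}]]" for title in note.related]
    return "\n".join(lines) + "\n"
```

The rendered string is what would be written to a `.md` file in the vault.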

The note lands as a markdown file in my Obsidian vault. Two minutes after I dropped the link. By the time I finish my coffee, the knowledge from that video is sitting in my corpus. Structured and cross-referenced. Reachable by the search layer.

I never go back. The video, in the form that matters to me, has already been read. The system cuts the rope.

Same thing, three more ways.

Articles. I drop a URL into a Discord channel. Blog post, paper, tool page. The agent figures out the content type, pulls it down, runs the same template. Note lands in the same vault.

Personal stuff. Something happens. A story I want to keep, a memory that surfaced, something I learned about one of my kids. I message Coach, my coaching agent, on Telegram and tell her. She asks the follow-up questions. Distills it to what’s meaningful. Saves it. Eventually it lands in the EBKB, the curated biographical knowledge base, through a pipeline I wrote about earlier.

The fourth route took me the longest to see. Coach has her own memory. Files she keeps from our coaching sessions. Every so often she walks through those files and checks them against the EBKB. Anything stable that’s missing, she adds. The wiki grows from using the system, not from me sitting down to do intake.

Four routes. One vault. All of it markdown.

Karpathy mentions QMD, a local search engine for markdown, near the end of that gist. Tucked into optional CLI tools. He writes that at small scale the index file is enough, but as the wiki grows you want proper search.

He’s got it in the wrong place.

For personal content, for biographical content, search is not a scale-up concern. It is the cold-start thing. The agent doesn’t know what to look for at session zero. An index file gives it a table of contents. Not a sense of what is relevant to a conversation that hasn’t happened yet.

QMD runs hybrid. BM25 plus vector plus LLM rerank, across three collections. Bookmarks, my Obsidian vault, the EBKB. 1,482 documents. When the writing partner reaches for “stories about leadership under pressure,” it surfaces a story about a tugboat captain from twenty years ago that nobody tagged that way. The connection shows up at query time. The search layer is the connective tissue. Makes tagging pointless.
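To make "hybrid" concrete, here is a sketch of one common way to merge a keyword ranking and a vector ranking: reciprocal rank fusion. This illustrates the general technique only. The text above does not say how QMD combines its result lists, and the LLM rerank step is left out. The document IDs are made up.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one.

    Each document scores 1 / (k + rank) per list it appears in, so a
    document that ranks well in either list can surface near the top.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for stable output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results for "stories about leadership under pressure".
bm25_hits = ["leadership-notes", "crisis-memo", "tugboat-captain"]
vector_hits = ["tugboat-captain", "leadership-notes", "storm-story"]
fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
```

In this made-up example the tugboat story is last on the keyword side, because it never uses the word "leadership", but first on the vector side. Fusion lifts it to second overall, which is the untagged-connection effect described above.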

Other people are building here. Rowboat extracts typed entities from meetings and emails. ex-brain tracks business facts through hybrid search. Both real systems. Both good. Neither one targets personal identity content. Neither one makes search the foundation, which I think it has to be when the corpus is you.

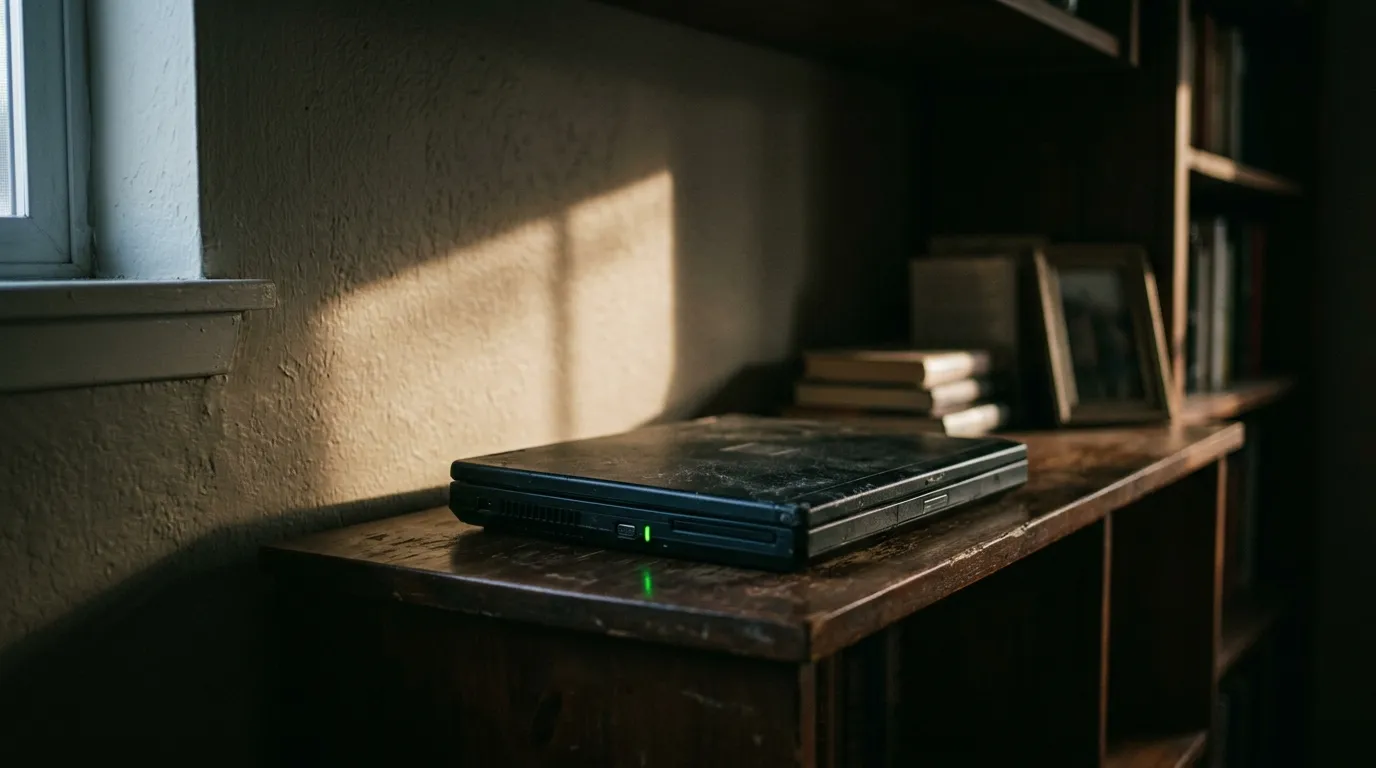

The whole thing runs on a four-year-old gaming laptop. GTX 1650 Ti. Four gigs of VRAM. Always on, in a corner of my office. QMD serves the search layer over MCP. Agents tunnel in from everywhere. My Windows dev box, this laptop, whichever machine I’m working from.

Nothing leaves the LAN. The biographical content lives on hardware I own. Privacy here is not a checkbox in a SaaS dashboard. It is the physical fact of where the data sits.

The laptop cost about two hundred dollars, used. No monthly bill. No managed service. The infrastructure for a system this personal was already in my closet. It just needed something to do.

Karpathy left the gist abstract on purpose. Go build your own version. The wiki is where things end up. The system that keeps filling it, that’s the work.

This afternoon, when I opened that tool and it already knew me, four pipelines had been doing the work. A gaming laptop in the corner, running. That was the whole thing.

Technical details in the companion gist. Intake pipelines, QMD config, hardware setup.

Erik Benjaminson is founder of Sapient Technology Group, an applied AI lab where he designs and builds production multi-agent systems. SapientTech.dev